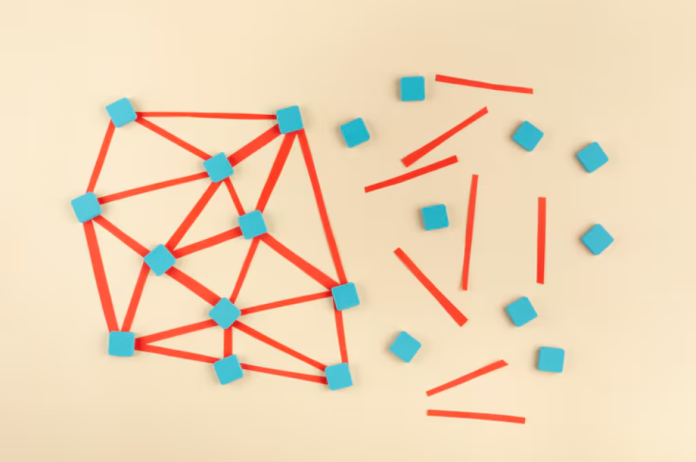

Many real-world datasets show relationships that are neither strictly linear nor completely random. Traditional linear models struggle to capture such patterns, while complex machine learning models often sacrifice interpretability for accuracy. Generalized Additive Models, commonly known as GAMs, address this gap by combining flexibility with transparency. They allow each input variable to influence the outcome through its own smooth function, making the model easier to understand and explain.

A key component behind this flexibility is the smoother fitted to each predictor, typically a kernel-weighted local regression or a penalised regression spline; this article groups these under the terms smoothing kernels and local regression splines. These smoothers help represent non-linear covariate effects without turning the model into a black box. For learners and practitioners exploring applied statistics or enrolling in a data scientist course in Kolkata, understanding how GAM smoothing kernels work provides a strong foundation for interpretable modelling.

Understanding Generalized Additive Models

A Generalized Additive Model extends the idea of a generalized linear model by replacing fixed linear terms with smooth functions. Instead of assuming that a predictor has a straight-line relationship with the target variable, GAMs let the data determine the shape of that relationship.

Mathematically, a GAM represents the expected response, possibly transformed by a link function, as a sum of smooth functions of individual predictors. The functions are estimated jointly, for example by backfitting or penalised likelihood, yet each one can be inspected on its own as part of a single coherent model. This structure makes GAMs particularly useful when relationships are non-linear but additive, such as the effect of age, temperature, or income on an outcome.
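Written out, the additive structure takes the following standard form. The symbols are notation introduced here for illustration: g is the link function, β₀ an intercept, and f_j the smooth function for predictor x_j, usually centred so that the intercept is identifiable.

```latex
g\bigl(\mathbb{E}[\,y \mid x_1, \dots, x_p\,]\bigr)
  = \beta_0 + \sum_{j=1}^{p} f_j(x_j),
\qquad
\mathbb{E}\bigl[f_j(x_j)\bigr] = 0 \quad \text{for each } j .
```

With an identity link and straight-line f_j, this reduces to ordinary linear regression.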

The simplicity of this additive structure ensures that each variable’s effect can be visualised and interpreted, which is a major reason GAMs are widely used in healthcare, economics, and environmental analysis.

Role of Smoothing Kernels in GAMs

Smoothing kernels are the mechanism that allows GAMs to learn non-linear patterns. A kernel defines how nearby data points influence the estimated value of a smooth function at a given location. In simple terms, kernels control how much “local” information is used when fitting the curve.
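To make the idea of "local" information concrete, here is a minimal sketch of a kernel-weighted average (the Nadaraya-Watson estimator) with a Gaussian kernel. It is only an illustration under simple assumptions, not how any particular GAM library fits its smooths; the function names and the toy sine data are made up for this example.

```python
import math

def gaussian_kernel(u):
    """Gaussian kernel: the weight decays smoothly as the scaled distance u grows."""
    return math.exp(-0.5 * u * u)

def kernel_smooth(x_train, y_train, x0, bandwidth):
    """Kernel-weighted average of y_train around the location x0."""
    weights = [gaussian_kernel((xi - x0) / bandwidth) for xi in x_train]
    return sum(w * yi for w, yi in zip(weights, y_train)) / sum(weights)

# Toy data (assumed for illustration): a noiseless sine curve on a grid
x = [i / 10 for i in range(63)]
y = [math.sin(xi) for xi in x]

# Estimate the curve at its peak, where the true value is 1
narrow = kernel_smooth(x, y, x0=math.pi / 2, bandwidth=0.2)
wide = kernel_smooth(x, y, x0=math.pi / 2, bandwidth=3.0)
print(f"narrow bandwidth: {narrow:.3f}, wide bandwidth: {wide:.3f}")
```

The narrow bandwidth uses only points close to the peak and stays near the true value, while the wide bandwidth averages over most of the curve and flattens the peak.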

If the kernel is too narrow, the model may follow noise and overfit the data. If it is too wide, important patterns may be smoothed out. GAM software typically balances this trade-off through a smoothing parameter (the bandwidth of a kernel or the penalty weight of a spline), often chosen by cross-validation, generalised cross-validation, or penalised likelihood methods such as REML.
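As a rough sketch of how such a choice can be automated, the snippet below scores a handful of candidate bandwidths by leave-one-out cross-validation on simulated data. The candidate grid, noise level, and helper names are assumptions for illustration; production GAM software generally uses more efficient criteria than this brute-force loop.

```python
import math
import random

def kernel_smooth(x_train, y_train, x0, bandwidth):
    """Gaussian-kernel weighted average of y_train around x0."""
    weights = [math.exp(-0.5 * ((xi - x0) / bandwidth) ** 2) for xi in x_train]
    total = sum(weights)
    if total == 0.0:  # every weight underflowed: fall back to the global mean
        return sum(y_train) / len(y_train)
    return sum(w * yi for w, yi in zip(weights, y_train)) / total

def loocv_error(x, y, bandwidth):
    """Mean squared error when each point is predicted from all the others."""
    n = len(x)
    squared_error = 0.0
    for i in range(n):
        x_rest = x[:i] + x[i + 1:]
        y_rest = y[:i] + y[i + 1:]
        prediction = kernel_smooth(x_rest, y_rest, x[i], bandwidth)
        squared_error += (y[i] - prediction) ** 2
    return squared_error / n

# Simulated data (assumed): sine signal plus Gaussian noise
random.seed(0)
x = [i / 20 for i in range(120)]
y = [math.sin(xi) + random.gauss(0, 0.3) for xi in x]

candidates = [0.02, 0.1, 0.3, 1.0, 3.0]
errors = {h: loocv_error(x, y, h) for h in candidates}
best_bandwidth = min(errors, key=errors.get)

for h in candidates:
    print(f"bandwidth {h:>4}: LOOCV error {errors[h]:.4f}")
print("selected bandwidth:", best_bandwidth)
```

The widest bandwidth flattens the sine signal and scores poorly, which is the over-smoothing case described above.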

For students studying statistical modelling or pursuing a data scientist course in Kolkata, grasping the concept of smoothing kernels is essential for understanding how flexibility and stability are managed in non-linear models.

Local Regression Splines Explained

Local regression splines are one of the most commonly used smoothing approaches in GAMs. They work by dividing the range of a predictor variable into small regions using points called knots. Within each region, a low-degree polynomial (often cubic) is fitted, and the pieces are constrained to join smoothly at the knots.

Unlike global polynomials, which can behave unpredictably at data extremes, local regression splines adapt to changes in the data gradually. This makes them robust and reliable for modelling real-world patterns. The smoothness of the resulting curve depends on both the number of knots and the penalty applied to excessive curvature.
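The interplay between knots and the curvature penalty can be sketched with a small penalised spline. This example assumes a linear truncated-power basis (one hinge per knot) and a ridge penalty on the hinge coefficients, which penalises changes of slope; real implementations usually prefer cubic or B-spline bases. All function names, the knot grid, and the penalty values are hypothetical.

```python
import math

def basis_row(x, knots):
    """Linear truncated-power basis: 1, x, and one hinge max(0, x - k) per knot."""
    return [1.0, x] + [max(0.0, x - k) for k in knots]

def solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    z = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * z[j] for j in range(i + 1, n))
        z[i] = (M[i][n] - tail) / M[i][i]
    return z

def fit_penalised_spline(x, y, knots, lam):
    """Minimise squared error plus lam times the sum of squared hinge coefficients."""
    B = [basis_row(xi, knots) for xi in x]
    p = len(B[0])
    A = [[sum(row[i] * row[j] for row in B) for j in range(p)] for i in range(p)]
    rhs = [sum(row[i] * yi for row, yi in zip(B, y)) for i in range(p)]
    for j in range(2, p):  # intercept and slope are left unpenalised
        A[j][j] += lam
    return solve(A, rhs)

def predict(beta, x0, knots):
    return sum(b * v for b, v in zip(beta, basis_row(x0, knots)))

# Toy data (assumed): a noiseless sine curve, knots every 0.5 units
x = [i / 10 for i in range(63)]
y = [math.sin(xi) for xi in x]
knots = [0.5 * k for k in range(1, 13)]

beta_light = fit_penalised_spline(x, y, knots, lam=1e-4)
beta_heavy = fit_penalised_spline(x, y, knots, lam=1e6)
light = predict(beta_light, math.pi / 2, knots)
heavy = predict(beta_heavy, math.pi / 2, knots)
print(f"light penalty: {light:.3f}, heavy penalty: {heavy:.3f}")
```

With a light penalty the fit tracks the sine peak closely; with a very heavy penalty the hinge coefficients shrink towards zero and the fit collapses towards a straight line.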

In practice, analysts rarely choose knots manually. Modern GAM implementations place knots by default and rely on the smoothness penalty to control flexibility, allowing practitioners to focus on interpretation rather than technical tuning, although the size of the basis may still need checking for strongly non-linear effects. This ease of use makes local regression splines popular in applied analytics and teaching environments.

Interpretability Benefits of GAM Smoothing

One of the strongest advantages of GAMs with smoothing kernels is interpretability. Each smooth function can be plotted to show how a single predictor affects the response while holding other variables constant. These plots are intuitive and easy to explain to non-technical stakeholders.
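The per-predictor curves can be recovered with a simple backfitting loop, sketched here with a Gaussian kernel smoother. This is a minimal illustration on simulated data, assuming an identity link and a fixed bandwidth; the function names and the true component shapes are invented for the example, and library implementations differ in detail.

```python
import math
import random

def smooth(x, r, bandwidth):
    """Gaussian-kernel weighted average of r, evaluated at every point in x."""
    fitted = []
    for x0 in x:
        w = [math.exp(-0.5 * ((xi - x0) / bandwidth) ** 2) for xi in x]
        fitted.append(sum(wi * ri for wi, ri in zip(w, r)) / sum(w))
    return fitted

def backfit(columns, y, bandwidth=0.3, n_iter=10):
    """Estimate the intercept and centred component functions of an additive model."""
    n, p = len(y), len(columns)
    intercept = sum(y) / n
    f = [[0.0] * n for _ in range(p)]
    for _ in range(n_iter):
        for j in range(p):
            # Partial residual: what remains after removing every other component
            partial = [
                y[i] - intercept - sum(f[k][i] for k in range(p) if k != j)
                for i in range(n)
            ]
            fj = smooth(columns[j], partial, bandwidth)
            mean_fj = sum(fj) / n
            f[j] = [v - mean_fj for v in fj]  # centre so the intercept is identifiable
    return intercept, f

def corr(a, b):
    """Pearson correlation, used here only as a rough sanity check."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((u - ma) * (v - mb) for u, v in zip(a, b))
    va = sum((u - ma) ** 2 for u in a)
    vb = sum((v - mb) ** 2 for v in b)
    return cov / math.sqrt(va * vb)

# Simulated data (assumed): y = x1^2 + sin(x2) + noise
random.seed(1)
n = 150
x1 = [random.uniform(-2, 2) for _ in range(n)]
x2 = [random.uniform(-2, 2) for _ in range(n)]
y = [a * a + math.sin(b) + random.gauss(0, 0.1) for a, b in zip(x1, x2)]

intercept, (f1, f2) = backfit([x1, x2], y)
print(f"corr(f1, x1^2)    = {corr(f1, [a * a for a in x1]):.3f}")
print(f"corr(f2, sin(x2)) = {corr(f2, [math.sin(b) for b in x2]):.3f}")
```

Plotting `f1` against `x1` and `f2` against `x2` would give the kind of partial-effect plot described above: each curve shows one predictor's contribution with the other held fixed.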

For example, a smooth curve might show that risk increases rapidly up to a certain point and then levels off. Such insights are difficult to extract from more complex models like random forests or neural networks. GAMs therefore provide a middle ground between simple linear models and highly flexible machine learning techniques.

This balance is particularly valuable for professionals trained through a data scientist course in Kolkata, where practical decision-making and explainability are often as important as predictive performance.

Practical Applications and Use Cases

GAMs with smoothing kernels are widely applied across industries. In healthcare, they help model non-linear effects of age or biomarkers on disease risk. In finance, they capture changing relationships between economic indicators and market outcomes. In environmental science, they are used to analyse seasonal and spatial patterns in climate data.

Because GAMs, like generalized linear models, pair the additive predictor with a link function and a response distribution, they can be used for regression, classification, and count data modelling. Their interpretability also makes them suitable for regulatory or high-stakes environments where model transparency is required.
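As a small illustration of how one additive predictor serves different response types, the sketch below maps the same value through three common inverse link functions. The family names and the component values are hypothetical; they follow the usual generalized linear model conventions rather than any specific library's API.

```python
import math

def inverse_link(eta, family):
    """Map the additive predictor eta to the expected response for a given family."""
    if family == "gaussian":   # identity link: regression
        return eta
    if family == "binomial":   # logit link: classification probability
        return 1.0 / (1.0 + math.exp(-eta))
    if family == "poisson":    # log link: expected count
        return math.exp(eta)
    raise ValueError(f"unknown family: {family}")

# Hypothetical additive predictor: intercept + f1(x1) + f2(x2)
eta = 0.5 + (-0.2) + 0.1

for family in ("gaussian", "binomial", "poisson"):
    print(f"{family:>8}: {inverse_link(eta, family):.3f}")
```

The smooth functions are always added on the scale of the link, so their plots are read on that scale (for example, log-odds for a binomial model).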

Learning to implement and interpret these models equips analysts with a versatile tool that fits many applied scenarios.

Conclusion

Generalized Additive Model smoothing kernels provide a powerful yet interpretable way to model non-linear relationships. By using local regression splines, GAMs capture complex patterns while maintaining clarity about how each variable influences the outcome. This combination of flexibility and transparency makes them highly valuable in practical data science work.

For those building strong statistical foundations through a data scientist course in Kolkata, mastering GAM smoothing kernels is an important step towards creating models that are not only accurate but also understandable and trustworthy.